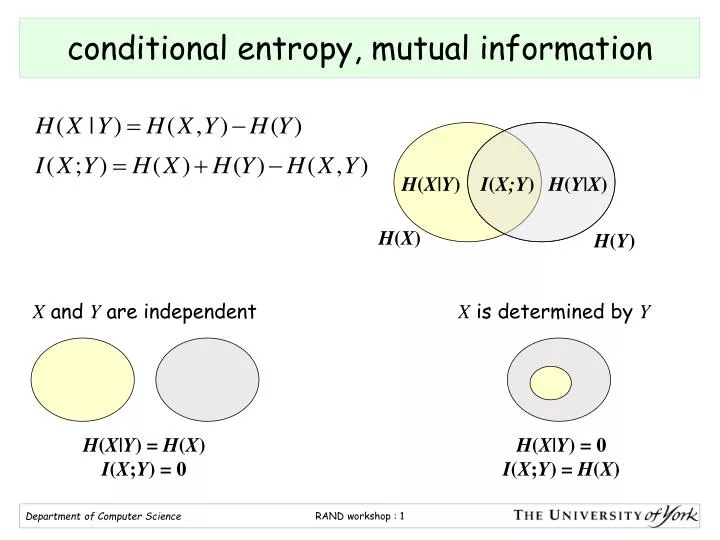

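Using the product rule of probability, $p(x, y) = p(x \mid y)\, p(y)$, so that $\log p(x \mid y) = \log p(x, y) - \log p(y)$, one finds that the conditional entropy equals $H(X \mid Y) = H(X, Y) - H(Y)$, where $H(X, Y)$ is the joint entropy of $X$ and $Y$.

*Figure: Entropy $H(X)$ (i.e. the expected surprisal) of a coin flip, measured in bits, graphed versus the bias of the coin $\Pr(X = 1)$, where $X = 1$ represents a result of heads.*

Given a discrete random variable $X$, which takes values in the alphabet $\mathcal{X}$ and is distributed according to $p : \mathcal{X} \to [0, 1]$, the entropy is
$$H(X) = -\sum_{x \in \mathcal{X}} p(x) \log p(x),$$
where $\sum_{x \in \mathcal{X}}$ denotes the sum over the variable's possible values.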
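As a rough illustration of the entropy definition, the following Python sketch computes $H(X)$ in bits and evaluates it for a few coin biases, sampling the coin-flip curve described in the figure caption. The helper names `entropy` and `coin_entropy` are hypothetical, chosen only for this example.

```python
import math


def entropy(probs, base=2.0):
    """Shannon entropy -sum p log p of a probability vector (bits by default).

    Written as sum p * log(1/p); outcomes with p = 0 are skipped,
    following the convention 0 log 0 = 0.
    """
    return sum(p * math.log(1.0 / p, base) for p in probs if p > 0)


def coin_entropy(bias):
    """Entropy in bits of a coin flip with Pr(X = 1) = bias (heads)."""
    return entropy([bias, 1.0 - bias])


# Sample a few points of the binary entropy curve.
for bias in (0.0, 0.1, 0.25, 0.5, 0.75, 0.9, 1.0):
    print(f"Pr(X=1) = {bias:.2f}   H(X) = {coin_entropy(bias):.4f} bits")
```

The curve is symmetric around a fair coin: a certain outcome (bias 0 or 1) carries no surprise, while bias 0.5 gives the maximum of 1 bit.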

Information is quantified as "dits" (distinctions), a measure on partitions. Dits can be converted into Shannon's bits to obtain the formulas for conditional entropy and related quantities. In information theory, the entropy of a random variable is the average level of "information", "surprise", or "uncertainty" inherent in the variable's possible outcomes.

Definition. The conditional entropy of $X$ given $Y$ is
$$H(X \mid Y) = -\sum_{x, y} p(x, y) \log p(x \mid y) = -\mathbb{E}\left[\log p(X \mid Y)\right]. \tag{5}$$
The conditional entropy is a measure of how much uncertainty remains about the random variable $X$ when we know the value of $Y$. It is just the Shannon entropy with $p(x \mid y)$ replacing $p(x)$, averaged over all possible values of $Y$: $H(X \mid Y) = \sum_y p(y)\, H(X \mid Y = y)$.

If the probability that $X = x$ is denoted by $p(x)$, then we denote by $p(x \mid y)$ the probability that $X = x$, given that we already know that $Y = y$. The conditional entropy is thus the entropy of the conditional probability. It is referred to as the entropy of $X$ conditional on $Y$, and is written $H(X \mid Y)$. In Bayesian language, $Y$ represents our prior information about $X$. The conditional entropy $H(Y \mid X)$, in turn, reveals how much variability remains in $Y$ when $X$ is fixed.

The joint entropy measures how much uncertainty there is in the two random variables $X$ and $Y$ taken together. The joint entropy of jointly distributed random variables $X$ and $Y$ with $(X, Y) \sim p$ is
$$H(X, Y) = -\sum_{x, y} p(x, y) \log p(x, y).$$
This is simply the entropy of the pair $(X, Y)$ treated as a single random variable.

Theorem (chain rule). Let $X_1, X_2, \ldots, X_n$ be random variables with joint probability mass function $p(x_1, x_2, \ldots, x_n)$. Then
$$H(X_1, X_2, \ldots, X_n) = \sum_{i=1}^{n} H(X_i \mid X_{i-1}, \ldots, X_1).$$

Example (simplified Polynesian): use the chain rule to compute the joint entropy of a consonant and a vowel.

This paper presents a new period-finding method based on conditional entropy that is both efficient and accurate.
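To make the definition of conditional entropy concrete, here is a small Python sketch that computes $H(X \mid Y)$ three ways for a made-up joint distribution: directly from definition (5), via the identity $H(X, Y) - H(Y)$, and as the average over $y$ of the Shannon entropy of $p(x \mid y)$. The distribution `JOINT` and all function names are hypothetical, used only for illustration; all three results should agree up to floating-point rounding.

```python
import math
from collections import defaultdict

# Hypothetical joint distribution p(x, y), X in {"a", "b"}, Y in {0, 1}.
# The numbers are made up for illustration and sum to 1.
JOINT = {
    ("a", 0): 0.4,
    ("b", 0): 0.1,
    ("a", 1): 0.2,
    ("b", 1): 0.3,
}


def entropy_bits(probs):
    """Shannon entropy in bits, skipping zero-probability outcomes (0 log 0 = 0)."""
    return sum(p * math.log2(1.0 / p) for p in probs if p > 0)


def marginal_y(joint):
    """p(y) = sum over x of p(x, y)."""
    py = defaultdict(float)
    for (_, y), p in joint.items():
        py[y] += p
    return dict(py)


def cond_entropy_direct(joint):
    """Definition (5): -sum_{x,y} p(x,y) log2 p(x|y), with p(x|y) = p(x,y) / p(y)."""
    py = marginal_y(joint)
    return sum(p * math.log2(py[y] / p) for (_, y), p in joint.items() if p > 0)


def cond_entropy_via_joint(joint):
    """Identity H(X|Y) = H(X,Y) - H(Y)."""
    return entropy_bits(joint.values()) - entropy_bits(marginal_y(joint).values())


def cond_entropy_averaged(joint):
    """sum_y p(y) * H(X | Y = y): entropy of p(.|y), averaged over Y."""
    total = 0.0
    for y, p_y in marginal_y(joint).items():
        if p_y == 0:
            continue  # values of Y that never occur contribute nothing
        conditional = [p / p_y for (_, yy), p in joint.items() if yy == y]
        total += p_y * entropy_bits(conditional)
    return total


for name, fn in [("direct (5)", cond_entropy_direct),
                 ("H(X,Y) - H(Y)", cond_entropy_via_joint),
                 ("averaged over Y", cond_entropy_averaged)]:
    print(f"{name:16s} H(X|Y) = {fn(JOINT):.6f} bits")
```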
The conditional entropy measures how much entropy a random variable $X$ has remaining if we have already learned the value of a second random variable $Y$. The entropy of a collection of random variables is the sum of conditional entropies.

Joint entropy. Recall that the entropy of a random variable $X$ over the alphabet $\mathcal{X}$ is defined by
$$H(X) = -\sum_{x \in \mathcal{X}} P_X(x) \log P_X(x).$$
Shorter notation: for $X \sim p$, let $H(X) = -\sum_x p(x) \log p(x)$, where the summation is over the domain of $X$.
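The statement that the entropy of a collection of random variables is the sum of conditional entropies can be checked numerically. The sketch below assumes an arbitrary, made-up joint distribution `JOINT3` over three binary variables and compares $H(X_1, X_2, X_3)$ with $H(X_1) + H(X_2 \mid X_1) + H(X_3 \mid X_1, X_2)$. Each conditional term is computed directly from its definition rather than by subtracting joint entropies, so the check is not circular. All names here are hypothetical.

```python
import itertools
import math
from collections import defaultdict


def entropy_of(dist):
    """Shannon entropy in bits of a dict mapping outcomes to probabilities."""
    return sum(p * math.log2(1.0 / p) for p in dist.values() if p > 0)


def marginalize(joint, k):
    """Distribution of the first k coordinates of each outcome tuple."""
    out = defaultdict(float)
    for outcome, p in joint.items():
        out[outcome[:k]] += p
    return dict(out)


def cond_entropy_given_prefix(joint, i):
    """H(X_i | X_1..X_{i-1}) = -sum p(x_1..x_i) log2 p(x_i | x_1..x_{i-1}), 1-based i."""
    upto_i = marginalize(joint, i)
    prefix = marginalize(joint, i - 1)  # for i = 1 this is {(): 1.0}
    return sum(p * math.log2(prefix[o[:-1]] / p) for o, p in upto_i.items() if p > 0)


# Hypothetical joint distribution: weights 1..8 over the 8 outcomes, normalized by 36.
outcomes = list(itertools.product((0, 1), repeat=3))
JOINT3 = {o: (k + 1) / 36 for k, o in enumerate(outcomes)}

lhs = entropy_of(JOINT3)
rhs = sum(cond_entropy_given_prefix(JOINT3, i) for i in range(1, 4))
print(f"H(X1,X2,X3)                  = {lhs:.6f} bits")
print(f"sum of conditional entropies = {rhs:.6f} bits")
```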
